A Guide to Diagnosing High CPU Utilization in Postgres RDS

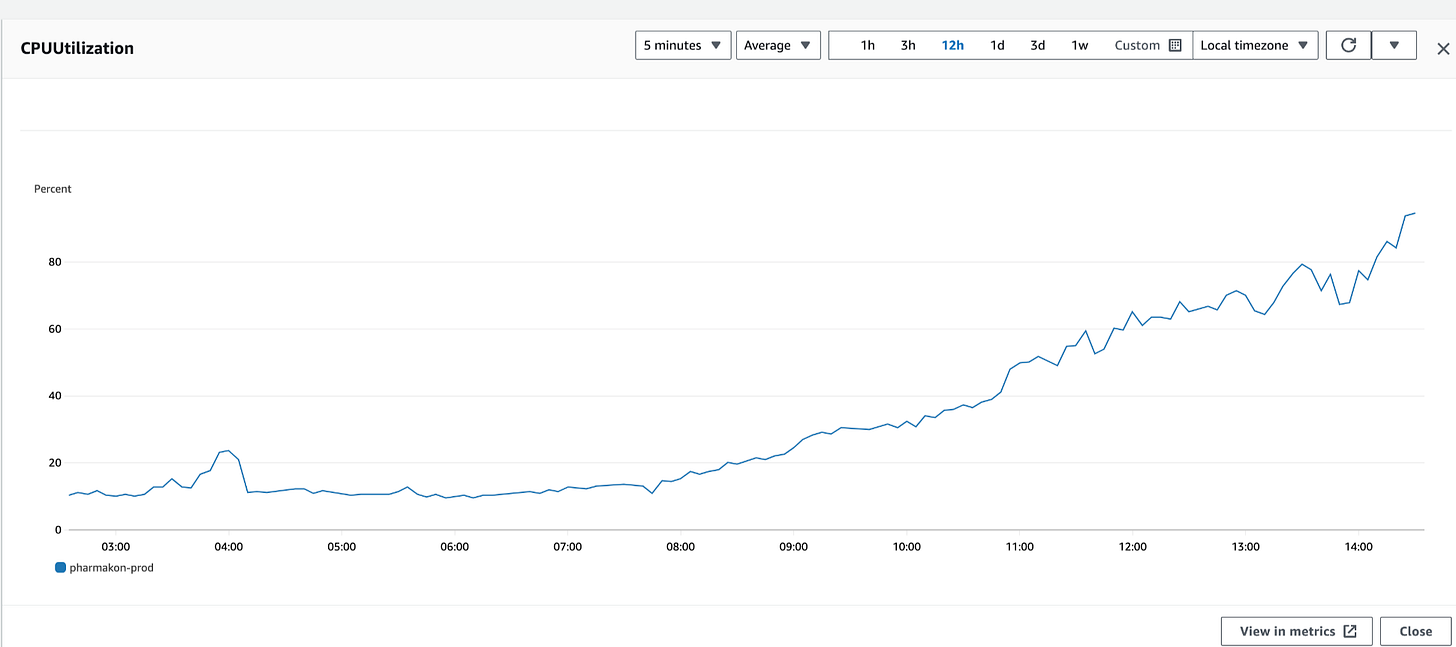

RDS (Relational Database Service) is a highly managed, reliable database service, but occasionally, performance issues arise. One such common issue is when the RDS Postgres database’s CPU gets pinned to 100%, causing significant system slowdowns or even outages. Last week, our team faced this issue. After a series of investigations, we were able to resolve it. This blog will walk through the steps to identify and resolve the CPU-pinning issue in RDS.

What is a CPU-Pinned Issue?

First of all, let’s talk about what a CPU-pinned state means. CPU-pinning occurs when the CPU usage of your RDS instance spikes and stays close to 100% for an extended time. This can lead to query latency, slow response times, connection issues, and potential service outages. CPU pinning occurs when one or a few specific processes or queries monopolize the CPU resources of an instance or server, driving the CPU usage to 100% or close to it for extended periods. In this context, "pinning" doesn't mean the operating system assigning processes to specific cores (as in core pinning) but refers to the processes hogging all available CPU resources on the entire instance. It can cause system slowdowns, prevent new processes from running, and lead to system unresponsiveness.

True CPU Pinning and Login Issues:

In some severe cases, CPU pinning can prevent you from even logging into the database. When CPU utilization reaches 100%, connections might time out or fail, making it difficult to interact with the database directly. In these situations, it’s important to attempt to connect with minimal permissions or through monitoring tools like AWS CloudWatch to gather insights. If login remains impossible, consider using emergency measures such as reducing traffic or rebooting the instance during a maintenance window. ( Updated: As per feedback from reader )

What Caused CPU-Pinning in our case ?

After some investigation ( steps shown below ) we found that the root cause of this problem was query in our database was consuming excessive CPU resources on a particular RDS instance, leading to CPU pinning on that instance. Turns out our, application database connections were long lived and persist on the same instance where they were initially established. This means that subsequent queries from the same connection will be executed on the same instance

Since it was in the middle of the day, rolling over pods to restart the connection was not an option. Here are the steps we followed to resolve the issue.

( Update: As per feedback from reader, added image showing RDS connections being pinned to a particular replica instead of being distributed between different replicas. )

Step 1: Check and Analyze Query Performance

The first step in resolving the CPU-pinning issue is identifying the problematic queries causing high CPU usage. Use PostgreSQL's pg_stat_activity to check for long-running or locked queries that could be hogging resources.

You can run the following query to get a list of active queries and their details:

SELECT * FROM pg_stat_activity

WHERE

xact_start BETWEEN '<start timestamp>' AND '<end timestamp>'

AND username = '<readonly_user>';This query will show you all active transactions, including long-running and locked queries. Pay particular attention to transactions running for a long time or stuck in a wait state.

Step 2: Check for Long-Running or Locked Queries

One of the primary culprits for high CPU usage is long-running or locked queries. These queries consume resources and can cause other queries to wait or fail due to resource contention.

To address this, check the pg_stat_activity table to identify these queries.

SELECT pid, now() - query_start AS duration, usename, state, query

FROM pg_stat_activity

WHERE state = 'active'

AND now() - query_start > interval '5 minutes'

ORDER BY duration DESC;pid: Process ID of the query.

duration: How long the query has been running.

usename: The user running the query.

state: The current state of the query (e.g., 'active', 'idle').

query: The SQL query itself.

Once you've identified a long-running query, you can use EXPLAIN ANALYZE to understand how PostgreSQL is executing it and why it might be slow. This command provides a detailed breakdown of the query execution, including where time is being spent.

EXPLAIN ANALYZE <your_query_here>;For example, if the long-running query is:

SELECT * FROM large_table WHERE condition = 'value';You can analyze it using:

EXPLAIN ANALYZE SELECT * FROM large_table WHERE condition = 'value';If you find any locked or long-running queries that aren't essential, you can terminate them using pg_cancel_backend or pg_terminate_backend:

Cancel a query (less disruptive):

SELECT pg_cancel_backend(<pid>);Terminate a backend process (more forceful):

SELECT pg_terminate_backend(<pid>);Be cautious when terminating queries as it may interrupt essential services. Review the query before taking action.

Step 3: Drop Redundant Indexes

While investigating performance issues, you may discover redundant or leftover indexes that were created during failed REINDEX operations or unnecessary optimizations. These extra indexes can degrade performance by increasing CPU and memory usage.

To clean up redundant indexes, follow these steps:

Identify the indexes: Use pg_indexes to list all indexes for your table:

SELECT indexname, indexdef FROM pg_indexes WHERE tablename = '<your_table>';Drop the unnecessary indexes: Once identified, you can drop redundant indexes using:

DROP INDEX IF EXISTS <index_name>;For example:

DROP INDEX IF EXISTS redundant_index_1;If you need to drop multiple indexes at once, you can use a transaction:

BEGIN;

DROP INDEX IF EXISTS index_1;

DROP INDEX IF EXISTS index_2;

COMMIT;Cleaning up unnecessary indexes will free up system resources, reduce maintenance costs, and improve query performance.

Step 4: Perform Maintenance with VACUUM or pg_repack

Another common reason for high CPU usage is database bloat—this happens when tables and indexes grow inefficiently due to heavy updates or deletes. Use VACUUM to reclaim storage space and optimize performance.

VACUUM helps reduce the size of tables and indexes by removing dead rows left behind by updates or deletes.

VACUUM FULL;pg_repack is a more comprehensive tool than

VACUUMthat rebuilds tables and indexes, providing more space savings and performance gains without significant downtime. It re-generates the table by copying it over a new one along with its related objects, which helps reclaim the wasted space.pg_repack -d <database_name>

Running these commands can significantly improve performance, especially in systems with frequent write operations.

Note: Be aware that VACUUM FULL locks tables, so use it during low-traffic periods or consider pg_repack if you need to avoid downtime.

Step 5: Redeploy Application to Terminate Hung Queries

In some cases, queries could be hung due to application logic or bugs in the recent deployment. If you've exhausted other methods and the CPU remains pinned, consider redeploying your application through your CI/CD pipeline. This may help terminate any hung or stuck queries.

While redeploying can be a quick fix, be sure to investigate the root cause of any problematic queries or logic in your application.

Step 6: Reboot the Database as a Last Resort

If all else fails and your RDS instance remains unresponsive or the CPU is still pinned at 100%, you may need to reboot the database. Rebooting clears all connections and may resolve the issue, but this should be treated as a last resort.

Warning: Rebooting your RDS instance will cause downtime. Only proceed if you are facing a complete outage or major issues that prevent the system from functioning correctly. Always ensure this step is done with proper coordination and during a maintenance window if possible.

Preventing Future CPU-Pinning Issues

To avoid future CPU-pinning issues, implement proactive measures such as:

Regularly optimizing query performance.

Setting up autoscaling for RDS instances.

Distributing heavy workloads across multiple databases or read replicas.

Using automated failover mechanisms to reduce downtime during high CPU utilization.

Monitoring query performance and database resource consumption regularly will also help you catch potential issues before they escalate.

A CPU-pinned issue in RDS can seriously impact the performance and availability of your system, but with the right approach, you can mitigate it effectively:

Analyze and optimize query performance by checking long-running queries in

pg_stat_activityand usingEXPLAIN ANALYZE.Terminate locked or long-running queries using

pg_cancel_backendorpg_terminate_backend.Monitor analytics queries to ensure they don’t cause high CPU usage.

Run VACUUM or pg_repack to reduce database bloat and reclaim space.

Redeploy the application to terminate hung queries if necessary.

Reboot the database only as a last resort when all other methods have failed.

By following these steps, you can resolve high CPU usage issues and ensure your RDS instance continues running smoothly.

References

https://manuel.bernhardt.io/posts/2023-11-16-core-pinning/

https://en.wikipedia.org/wiki/Processor_affinity

https://www.linkedin.com/advice/1/what-advantages-disadvantages-using-cpu-affinity

https://docs.redhat.com/en/documentation/red_hat_enterprise_linux_openstack_platform/7/html/instances_and_images_guide/ch-cpu_pinning

https://github.com/concourse/concourse/issues/6589

https://www.cockroachlabs.com/blog/high-cpu-usage-postgres/

https://dba.stackexchange.com/questions/44084/troubleshooting-high-cpu-usage-from-postgres-and-postmaster-services